本次先写代码部分。跳过1,2章节。看兴趣补充吧。

本次code part是要写一个神经转移式依存句法分析。

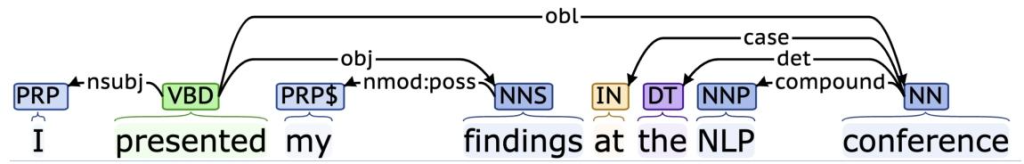

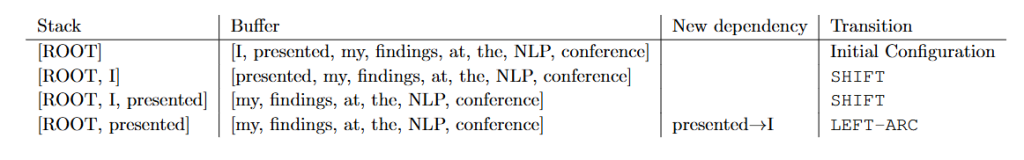

基础概念:依存分析器通过栈、缓冲区和依存列表维护部分分析状态,支持 SHIFT(缓冲区首词入栈)、LEFT-ARC(栈次顶词依存于栈顶词)、RIGHT-ARC(栈顶词依存于次顶词)三种转移操作。

具体子任务

栈:当前正在处理的单词。

缓冲区: 待处理的单词缓冲区。

依存列表: 解析器预测的依赖项列表。

起初,栈中只包含ROOT,依赖列表为空,缓冲区按顺序包含句子的所有单词。在每一步中,解析器都会对部分解析结果应用一个转换,直到缓冲区为空且栈的大小为1。

• SHIFT:从缓冲区中移除第一个单词,并将其压入栈中。

• LEFT-ARC:将栈上的第二个(倒数第二个添加的)项目标记为第一个项目的依存项,并从栈中移除第二个项目,同时将“第一个词→第二个词”的依存关系添加到依存列表中。

• RIGHT-ARC:将栈上的第一个(最近添加的)项目标记为第二个项目的从属项,并从栈中移除第一个项目,同时将第二个词→第一个词的依存关系添加到依存关系列表中。

For example

一个包含n个单词的句子需要2n步,前n步进行shift,n-2n步骤进行SHIFT,LEFT-ARC.

code1 initilize Partial Parse

为每个句子创建一个PartialParse对象。

函数parse_step 接收模型预测的字符串"S""LA""RA",我们需要维护对应的栈,缓冲区,依存关系。

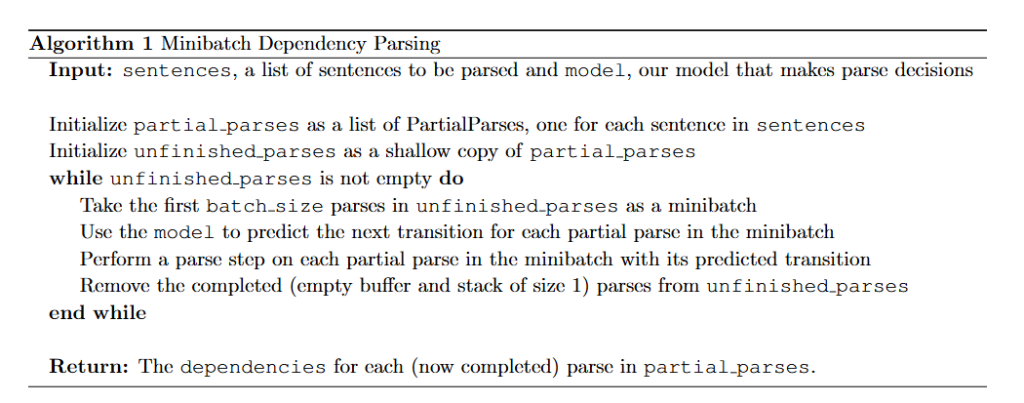

minibatch_parse 函数:

这里最讨厌的是没有给模型预测的接口,也不知道模型预测输出是什么。

class PartialParse(object):

def __init__(self, sentence):

"""Initializes this partial parse.

@param sentence (list of str): The sentence to be parsed as a list of words.

Your code should not modify the sentence.

"""

# The sentence being parsed is kept for bookkeeping purposes. Do NOT alter it in your code.

self.sentence : list[str] = sentence

self.stack : list[str] = ["ROOT"]

self.buffer : list[str] = list(sentence)

self.dependencies : list[tuple[str, str]] = []

def parse_step(self, transition):

"""Performs a single parse step by applying the given transition to this partial parse

@param transition (str): A string that equals "S", "LA", or "RA" representing the shift,

left-arc, and right-arc transitions. You can assume the provided

transition is a legal transition.

"""

### YOUR CODE HERE (~7-12 Lines)

### TODO:

### Implement a single parsing step, i.e. the logic for the following as

### described in the pdf handout:

### 1. Shift

### 2. Left Arc

### 3. Right Arc

if transition == "S":

self.stack.append(self.buffer.pop(0)) # shift the first word in the buffer to the stack

elif transition == "LA":

if len(self.stack) < 2:

raise ValueError("Left arc transition requires at least 2 items on the stack")

self.dependencies.append((self.stack[-1], self.stack[-2]))

self.stack.pop(-2) #remove

elif transition == "RA":

if len(self.stack) < 2:

raise ValueError("Right arc transition requires at least 2 items on the stack")

self.dependencies.append((self.stack[-2], self.stack[-1]))

self.stack.pop(-1)

### END YOUR CODE

def parse(self, transitions):

"""Applies the provided transitions to this PartialParse

@param transitions (list of str): The list of transitions in the order they should be applied

@return dependencies (list of string tuples): The list of dependencies produced when

parsing the sentence. Represented as a list of

tuples where each tuple is of the form (head, dependent).

"""

for transition in transitions:

self.parse_step(transition)

return self.dependencies

def minibatch_parse(sentences, model, batch_size):

"""Parses a list of sentences in minibatches using a model.

@param sentences (list of list of str): A list of sentences to be parsed

(each sentence is a list of words and each word is of type string)

@param model (ParserModel): The model that makes parsing decisions. It is assumed to have a function

model.predict(partial_parses) that takes in a list of PartialParses as input and

returns a list of transitions predicted for each parse. That is, after calling

transitions = model.predict(partial_parses)

transitions[i] will be the next transition to apply to partial_parses[i].

@param batch_size (int): The number of PartialParses to include in each minibatch

@return dependencies (list of dependency lists): A list where each element is the dependencies

list for a parsed sentence. Ordering should be the

same as in sentences (i.e., dependencies[i] should

contain the parse for sentences[i]).

"""

dependencies = []

### YOUR CODE HERE (~8-10 Lines)

### TODO:

### Implement the minibatch parse algorithm. Note that the pseudocode for this algorithm is given in the pdf handout.

###

### Note: A shallow copy (as denoted in the PDF) can be made with the "=" sign in python, e.g.

### unfinished_parses = partial_parses[:].

### Here `unfinished_parses` is a shallow copy of `partial_parses`.

### In Python, a shallow copied list like `unfinished_parses` does not contain new instances

### of the object stored in `partial_parses`. Rather both lists refer to the same objects.

### In our case, `partial_parses` contains a list of partial parses. `unfinished_parses`

### contains references to the same objects. Thus, you should NOT use the `del` operator

### to remove objects from the `unfinished_parses` list. This will free the underlying memory that

### is being accessed by `partial_parses` and may cause your code to crash.

# 初始化部分解析列表:为每个句子创建一个PartialParse对象

# Initialize partial parses as a list of PartialParses, one for each sentence in sentences

partial_parses = [PartialParse(sentence) for sentence in sentences] # 使用列表推导式,为sentences中的每个句子创建一个PartialParse实例

# 初始化未完成的解析列表:作为partial_parses的浅拷贝

# Initialize unfinished parses as a shallow copy of partial parses

unfinished_parses = partial_parses[:] # 使用切片操作创建浅拷贝,两个列表引用相同的PartialParse对象

# 当未完成的解析列表不为空时,继续循环处理

# while unfinished parses is not empty do

while unfinished_parses: # 只要unfinished_parses列表不为空,就继续执行循环

# 从未完成的解析列表中取出前batch_size个解析作为一个小批次

# Take the first batch size parses in unfinished parses as a minibatch

mini_batch_partial_parses = unfinished_parses[:batch_size] # 使用切片获取前batch_size个元素作为小批次

# 使用模型为小批次中的每个部分解析预测下一个转移操作

# Use the model to predict the next transition for each partial parse in the minibatch

transitions = model.predict(mini_batch_partial_parses) # 调用模型的predict方法,返回每个部分解析对应的下一个转移操作列表

# 对小批次中的每个部分解析执行其预测的转移操作

# Perform a parse step on each partial parse in the minibatch with its predicted transition

for partial_parse, transition in zip(mini_batch_partial_parses, transitions): # 使用zip将部分解析和对应的转移操作配对

partial_parse.parse_step(transition) # 对每个部分解析执行一步解析操作(SHIFT、LEFT-ARC或RIGHT-ARC)

# 从未完成的解析列表中移除已完成的解析(缓冲区为空且栈大小为1)

# Remove the completed (empty buffer and stack of size 1) parses from unfinished parses

new_unfinished_parses = [] # 创建一个新的空列表用于存储未完成的解析

for pp in unfinished_parses: # 遍历当前未完成的解析列表中的每个部分解析

if len(pp.buffer) > 0 or len(pp.stack) > 1: # 如果缓冲区不为空或栈大小大于1,说明解析尚未完成

new_unfinished_parses.append(pp) # 将该部分解析添加到新的未完成列表中

unfinished_parses = new_unfinished_parses # 更新未完成的解析列表,移除已完成的解析

# 为每个句子返回依赖关系列表

# Return dependencies for each sentence

for pp in partial_parses: # 遍历所有部分解析(包括已完成的)

dependencies.append(pp.dependencies) # 将每个部分解析的依赖关系列表添加到结果列表中

### END YOUR CODE

return dependenciescode2 model

我们现在要训练一个神经网络,使其能够在给定栈、缓冲区和依赖关系状态的情况下,预测下一步应该应用哪种转换。

首先,模型提取一个代表当前状态的特征向量。我们将使用原始神经依存句法分析论文中提出的特征集:《使用神经网络的快速准确依存句法分析器》。提取这些特征的函数已在utils/parser utils.py中为你实现。该特征向量由一系列标记组成(例如,栈中的最后一个词、缓冲区中的第一个词、栈中倒数第二个词的依存词——如果有的话,等等)。它们可以表示为一个整数列表w=[w1, w2, ..., wm],其中m是特征的数量,每个0 ≤wi<|V|是词汇表中某个标记的索引(|V|是词汇表大小)。然后,我们的网络会为每个词查找一个嵌入,并将它们连接成一个单一的输入向量:

x = [E_{w1} , ..., E_{wm} ] ∈ R^{dm}即对单词进行token化。

模型结构是:

h = ReLU(xW + b1)\\l = hU + b_2 \\ \hat{y} = softmax(l)class ParserModel(nn.Module):

""" Feedforward neural network with an embedding layer and two hidden layers.

The ParserModel will predict which transition should be applied to a

given partial parse configuration.

PyTorch Notes:

- Note that "ParserModel" is a subclass of the "nn.Module" class. In PyTorch all neural networks

are a subclass of this "nn.Module".

- The "__init__" method is where you define all the layers and parameters

(embedding layers, linear layers, dropout layers, etc.).

- "__init__" gets automatically called when you create a new instance of your class, e.g.

when you write "m = ParserModel()".

- Other methods of ParserModel can access variables that have "self." prefix. Thus,

you should add the "self." prefix layers, values, etc. that you want to utilize

in other ParserModel methods.

- For further documentation on "nn.Module" please see https://pytorch.org/docs/stable/nn.html.

"""

def __init__(self, embeddings, n_features=36,

hidden_size=200, n_classes=3, dropout_prob=0.5):

""" Initialize the parser model.

@param embeddings (ndarray): word embeddings (num_words, embedding_size)

@param n_features (int): number of input features

@param hidden_size (int): number of hidden units

@param n_classes (int): number of output classes

@param dropout_prob (float): dropout probability

"""

super(ParserModel, self).__init__()

self.n_features = n_features

self.n_classes = n_classes

self.dropout_prob = dropout_prob

self.embed_size = embeddings.shape[1]

self.hidden_size = hidden_size

self.embeddings = nn.Parameter(torch.tensor(embeddings))

### YOUR CODE HERE (~9-10 Lines)

### TODO:

### 1) Declare `self.embed_to_hidden_weight` and `self.embed_to_hidden_bias` as `nn.Parameter`.

### Initialize weight with the `nn.init.xavier_uniform_` function and bias with `nn.init.uniform_`

### with default parameters.

### 2) Construct `self.dropout` layer.

### 3) Declare `self.hidden_to_logits_weight` and `self.hidden_to_logits_bias` as `nn.Parameter`.

### Initialize weight with the `nn.init.xavier_uniform_` function and bias with `nn.init.uniform_`

### with default parameters.

###

### Note: Trainable variables are declared as `nn.Parameter` which is a commonly used API

### to include a tensor into a computational graph to support updating w.r.t its gradient.

### Here, we use Xavier Uniform Initialization for our Weight initialization.

### It has been shown empirically, that this provides better initial weights

### for training networks than random uniform initialization.

### For more details checkout this great blogpost:

### http://andyljones.tumblr.com/post/110998971763/an-explanation-of-xavier-initialization

###

### Please see the following docs for support:

### nn.Parameter: https://pytorch.org/docs/stable/nn.html#parameters

### Initialization: https://pytorch.org/docs/stable/nn.init.html

### Dropout: https://pytorch.org/docs/stable/nn.html#dropout-layers

###

### See the PDF for hints.

#! Step1

#! 构建一个神经网络,首先使用nn.Parameter声明一个权重矩阵和偏置向量,

#! 然后使用nn.init.xavier_uniform_初始化权重矩阵,使用nn.init.uniform_初始化偏置向量

#? 怎么确定矩阵的行列:1.作用:xW+b 2.输入维度 × 输出维度: 输入是n_features * self.embed_size,输出是hidden_size

self.embed_to_hidden_weight=nn.Parameter(torch.empty(n_features * self.embed_size, hidden_size))

self.embed_to_hidden_bias=nn.Parameter(torch.empty(hidden_size))

#! 初始化权重矩阵和偏置向量

nn.init.xavier_uniform_(self.embed_to_hidden_weight)

nn.init.uniform_(self.embed_to_hidden_bias)

#! Step2

self.dropout=nn.Dropout(p=dropout_prob)

#! Step3

#? 为什么第二层输出是n_classes:因为要预测3种转移操作,所以输出是3

self.hidden_to_logits_weight=nn.Parameter(torch.empty(hidden_size,n_classes))

self.hidden_to_logits_bias=nn.Parameter(torch.empty(n_classes))

#! 初始化权重矩阵和偏置向量

nn.init.xavier_uniform_(self.hidden_to_logits_weight)

nn.init.uniform_(self.hidden_to_logits_bias)

### END YOUR CODE

def embedding_lookup(self, w):

""" Utilize `w` to select embeddings from embedding matrix `self.embeddings`

@param w (Tensor): input tensor of word indices (batch_size, n_features)

@return x (Tensor): tensor of embeddings for words represented in w

(batch_size, n_features * embed_size)

"""

### YOUR CODE HERE (~1-4 Lines)

### TODO:

### 1) For each index `i` in `w`, select `i`th vector from self.embeddings

### 2) Reshape the tensor using `view` function if necessary

###

### Note: All embedding vectors are stacked and stored as a matrix. The model receives

### a list of indices representing a sequence of words, then it calls this lookup

### function to map indices to sequence of embeddings.

###

### This problem aims to test your understanding of embedding lookup,

### so DO NOT use any high level API like nn.Embedding

### (we are asking you to implement that!). Pay attention to tensor shapes

### and reshape if necessary. Make sure you know each tensor's shape before you run the code!

###

### Pytorch has some useful APIs for you, and you can use either one

### in this problem (except nn.Embedding). These docs might be helpful:

### Index select: https://pytorch.org/docs/stable/torch.html#torch.index_select

### Gather: https://pytorch.org/docs/stable/torch.html#torch.gather

### View: https://pytorch.org/docs/stable/tensors.html#torch.Tensor.view

### Flatten: https://pytorch.org/docs/stable/generated/torch.flatten.html

x = None

#! w.view(-1):将索引w展平成一维

#! 依据扩展后的索引从self.embeddings的第0维(行)中选择索引对应的行。

#! (batch_size,n_features)-->(batch_size*n_features,embed_size)

x=torch.index_select(self.embeddings, 0, w.view(-1))

#! (batch_size*n_features,embed_size)-->(batch_size,n_features*embed_size)

#! view 函数:改变张量的形状,第0维:batch_size(保持批次维度)

#! -1 = (总元素数) / w.shape[0] n_features * embed_size

x=x.view(w.shape[0], -1)

### END YOUR CODE

return x

def forward(self, w):

""" Run the model forward.

Note that we will not apply the softmax function here because it is included in the loss function nn.CrossEntropyLoss

PyTorch Notes:

- Every nn.Module object (PyTorch model) has a `forward` function.

- When you apply your nn.Module to an input tensor `w` this function is applied to the tensor.

For example, if you created an instance of your ParserModel and applied it to some `w` as follows,

the `forward` function would called on `w` and the result would be stored in the `output` variable:

model = ParserModel()

output = model(w) # this calls the forward function

- For more details checkout: https://pytorch.org/docs/stable/nn.html#torch.nn.Module.forward

@param w (Tensor): input tensor of tokens (batch_size, n_features)

@return logits (Tensor): tensor of predictions (output after applying the layers of the network)

without applying softmax (batch_size, n_classes)

"""

### YOUR CODE HERE (~3-5 lines)

### TODO:

### Complete the forward computation as described in write-up. In addition, include a dropout layer

### as decleared in `__init__` after ReLU function.

###

### Note: We do not apply the softmax to the logits here, because

### the loss function (torch.nn.CrossEntropyLoss) applies it more efficiently.

###

### Please see the following docs for support:

### Matrix product: https://pytorch.org/docs/stable/torch.html#torch.matmul

### ReLU: https://pytorch.org/docs/stable/nn.html?highlight=relu#torch.nn.functional.relu

logits = None

#! Step1

x=self.embedding_lookup(w)

#! Step2

x=torch.matmul(x, self.embed_to_hidden_weight) + self.embed_to_hidden_bias

#! Step3

x=F.relu(x)

#! Step4

x=self.dropout(x)

#! Step5

x=torch.matmul(x, self.hidden_to_logits_weight) + self.hidden_to_logits_bias

#! Step6

logits=x

### END YOUR CODE

return logits

Comments NOTHING